FREE

Harvard via Independent

Introduction to AI in the Data Center

About this course

- Introduction to GPU Computing | NVIDIA Training

- In this module you will see AI use cases in different industries, learn the concepts of AI, machine learning (ML), and deep learning (DL), and understand what a GPU is and how it differs from a CPU.

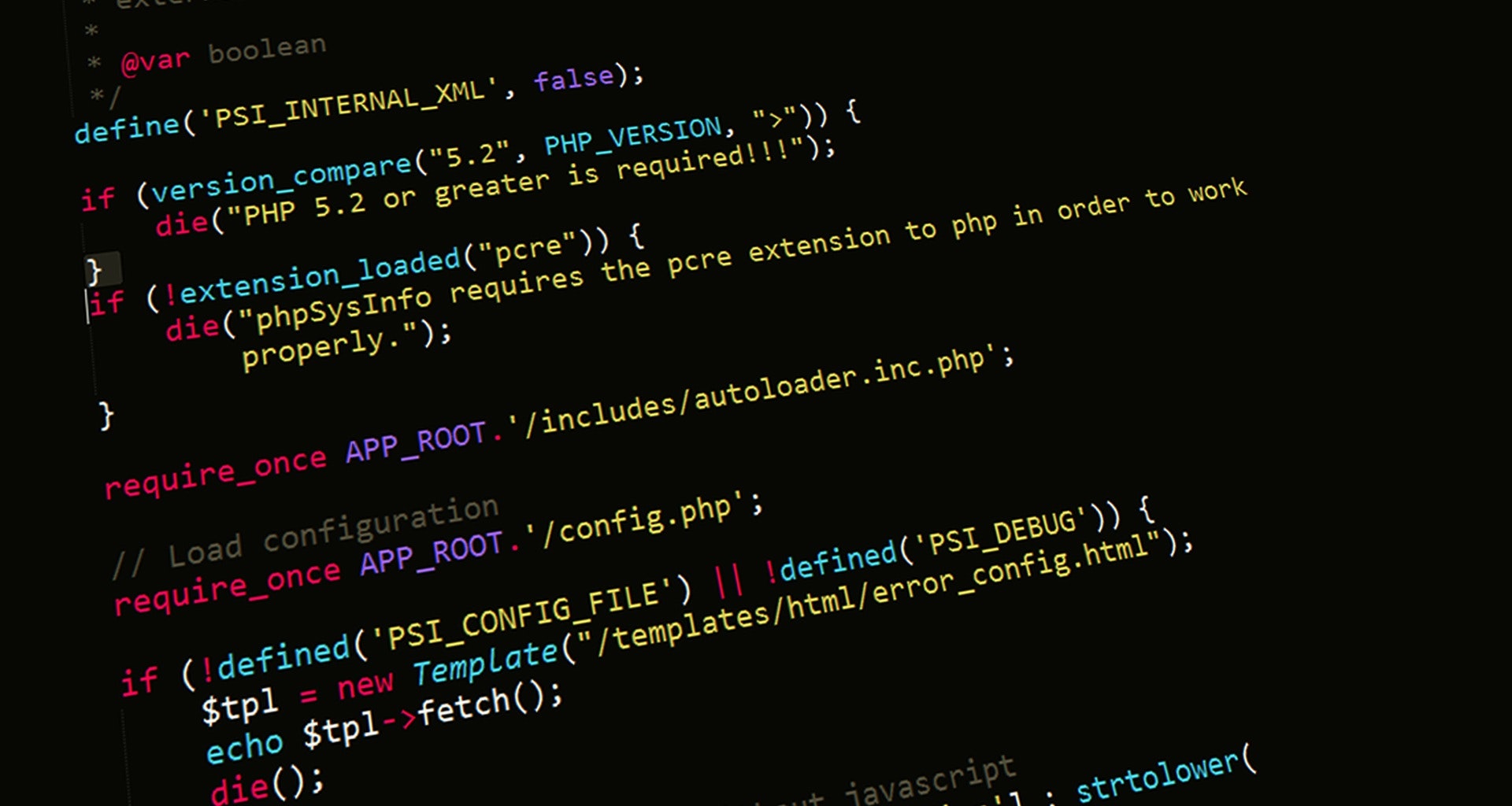

You will learn about the software ecosystem that has allowed developers to use GPU computing for data science, and about considerations when deploying AI workloads in a data center, whether on premises, in the cloud, in a hybrid model, or in a multi-cloud environment.

- Rack Level Considerations | NVIDIA Training

- In this module we will cover rack level considerations when deploying AI clusters.

You will learn about requirements for multi-system AI clusters, storage and networking considerations for such deployments, and an overview of NVIDIA reference architectures, which provide best practices to design systems for AI workloads.

- Data Center Level Considerations | NVIDIA Training

- This unit covers data center level considerations when deploying AI clusters, such as infrastructure provisioning and workload management, orchestration and job scheduling, tools for cluster management and monitoring, and power and cooling considerations for data center deployments.

Lastly, you will learn about AI infrastructure offered by NVIDIA partners through the DGX-Ready Data Center colocation program.

- Course Completion Quiz - Introduction to AI in the Data Center

- It is highly recommended that you complete all the course activities before you begin the quiz.

Good luck!
